The Jamaica Project - Compilers

Compilers have proven to be central to the research performed by the Jamaica project. Chip multi-processors (CMPs) may vary from 2-processor to 64-processor (or larger) configurations, and programmers cannot easily be expected to develop applications that adapt to these variations at runtime. Very different distribution strategies may be required even where the application contains explicit parallelism, for example through Java multi-threading or pthreads. Furthermore, legacy application code poses serious design challenges if it is to be modified to employ CMP resources.

Parallelizing compilers can be used to automatically identify parallelism latent in application code and to automatically distribute computation to the available processors. Dynamic compilation allows decisions about the costs & benefits of alternative parallelisation and distribution schemes to be based upon the CMP configuration found at runtime. Dynamic parallel compilation enables development of single-source applications which run on widely differing target CMP platforms. Dynamic parallel compilation works to best effect in an operating system which implements fast distribution of small-grained tasks using the kind of hardware features provided by the Jamaica architecture.

Static & dynamic compilation

The Jamaica project has developed a static C compiler and machine code assembler in order to prototype optimizations, test new architectural features and run legacy applications on the Jamaica simulator. In particular, static compilation from C code has been used to develop a kernel bootstrap and kernel runtime for a Java virtual machine based on the Jikes RVM. It is envisaged that this C kernel code may be replaced with a mix of statically and dynamically compiled Java code.

Having a clean threading model extensible to the Jamaica hardware made Java a natural choice for testing the Jamaica architecture. A Java static compiler (jtrans) and a dynamic compiler have both been implemented, the latter embedded in the Jikes RVM Java virtual machine. Both compilers have been developed to support implementation of the Java threading model and also a light-weight threading model, suitable for distribution of automatically parallelized code.

Supercompiler optimization

Supercompiler optimizations are a class of optimization that adds parallelism to code, as in High Performance Fortran. Supercompiler optimizations within the Jamaica project are aimed at automatically parallelizing Java programs.
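As a rough illustration of what such an optimization produces, the sketch below shows by hand the kind of restructuring a parallelizing compiler might apply to a loop whose iterations are independent. It is not output from jtrans or javar. The class and method names are hypothetical, and ordinary `java.lang.Thread` objects stand in for Jamaica's lightweight threads.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

public class ParallelLoopSketch {

    // Sequential form: each iteration reads in[i] and writes out[i] only,
    // so no iteration depends on the result of another.
    static void scaleSequential(double[] in, double[] out, double factor) {
        for (int i = 0; i < in.length; i++) {
            out[i] = in[i] * factor;
        }
    }

    // Restructured form: the iteration space is cut into contiguous chunks,
    // one per worker thread (workers must be at least 1).
    static void scaleParallel(double[] in, double[] out, double factor, int workers) {
        int n = in.length;
        int chunk = (n + workers - 1) / workers; // ceiling division
        List<Thread> threads = new ArrayList<>();
        for (int w = 0; w < workers; w++) {
            final int start = Math.min(n, w * chunk);
            final int end = Math.min(n, start + chunk);
            Thread t = new Thread(() -> {
                for (int i = start; i < end; i++) {
                    out[i] = in[i] * factor;
                }
            });
            threads.add(t);
            t.start();
        }
        for (Thread t : threads) {
            try {
                t.join(); // after join, the worker's writes to out are visible here
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
                throw new IllegalStateException("interrupted while waiting for workers", e);
            }
        }
    }

    public static void main(String[] args) {
        double[] in = new double[1000];
        for (int i = 0; i < in.length; i++) {
            in[i] = i;
        }
        double[] seq = new double[in.length];
        double[] par = new double[in.length];
        scaleSequential(in, seq, 2.5);
        scaleParallel(in, par, 2.5, 4);
        System.out.println(Arrays.equals(seq, par)); // prints "true"
    }
}
```

Creating a full Java thread per chunk is expensive relative to a small loop body, which is why a cheaper lightweight threading model matters for automatically parallelized code.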

An implementation of the javar static compiler has been made which employs lightweight threads implemented using Jamaica's chip multi-processing (CMP) and chip multi-threading (CMT) capabilities. This implementation has been incorporated into the jtrans static Java compiler and used to evaluate the efficiency of alternative CMP configurations for a variety of Java benchmark programs.

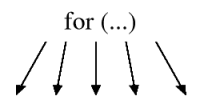

A dynamic compiler parallelization phase is being created for the Jikes RVM Java virtual machine. This compilation phase analyses Java bytecode and intermediate compiled code to detect opportunities for automatic distribution of parallel code to multiple cores. As well as taking into account the number of available cores, the compiler is able to make allowance for data read/write dependencies and data (cache) localisation needs when selecting possible distribution schemes. It is also able to consider global optimisation across method call boundaries (e.g. inlining and escape analysis), since all code is available in the runtime environment and all of it is susceptible to recompilation and recombination. The parallelization phase makes use of extensions to the Jikes RVM runtime which support efficient distribution and synchronization of small-grained tasks using Jamaica's CMP and CMT capabilities.
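The cost/benefit decision described above can be pictured with a toy model. The sketch below is an assumption-laden illustration, not the Jikes RVM phase itself: the class, the method `chooseTaskCount` and its cost model (a fixed overhead per distributed task, all costs in one abstract time unit) are hypothetical. It only shows why the same loop may be left serial on one configuration and split widely on another.

```java
public class DistributionChooser {

    // Returns the number of tasks (1 = stay serial) that minimises the
    // estimated running time under a simple, hypothetical cost model:
    //   time(tasks) = largestChunk * costPerIteration + tasks * overheadPerTask
    static int chooseTaskCount(long iterations, double costPerIteration,
                               double overheadPerTask, int cores) {
        int best = 1;
        double bestTime = iterations * costPerIteration; // serial: no distribution overhead
        for (int tasks = 2; tasks <= cores; tasks++) {
            long largestChunk = (iterations + tasks - 1) / tasks; // slowest task bounds the finish time
            double time = largestChunk * costPerIteration + tasks * overheadPerTask;
            if (time < bestTime) {
                bestTime = time;
                best = tasks;
            }
        }
        return best;
    }

    public static void main(String[] args) {
        // The core count is only known at runtime, which is the point of
        // making this decision in a dynamic compiler.
        int cores = Runtime.getRuntime().availableProcessors();
        System.out.println("cores available: " + cores);
        System.out.println("tasks for a 16-iteration loop: "
                + chooseTaskCount(16, 1.0, 50.0, cores));
        System.out.println("tasks for a 1,000,000-iteration loop: "
                + chooseTaskCount(1_000_000, 1.0, 50.0, cores));
    }
}
```

With a per-task overhead of 50 units, the 16-iteration loop stays serial on any core count, while the large loop is worth splitting. Lowering the overhead, as fast hardware support for small-grained tasks is meant to do, moves the break-even point toward much smaller loops. A real phase would also weigh the data dependencies and cache locality mentioned above, which this model ignores.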

Advanced parallelism optimization

Certain loop structures (such as those found in Viterbi algorithms) don't lend themselves to parallelization due to dependencies between loop iterations. By performing advanced analysis, based on eigenvectors, we are prototyping optimizations to restructure the loop and allow conventional supercompiler optimizations to then parallelize it.
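To make the problem concrete, the sketch below uses a much simpler loop than Viterbi: a linear recurrence in which each iteration needs the value produced by the previous one. It does not show the project's eigenvector-based analysis, whose details are not given here. Instead it illustrates a different, well-known restructuring: a run of iterations can be summarised as one affine map `x -> A*x + B` without knowing `x`, so chunks can be summarised independently and then combined. All names are hypothetical.

```java
public class RecurrenceSketch {

    // Original loop: iteration i reads the x written by iteration i-1,
    // so the iterations cannot simply be handed to different cores.
    static long runSequential(long[] a, long[] b, long x0) {
        long x = x0;
        for (int i = 0; i < a.length; i++) {
            x = a[i] * x + b[i];
        }
        return x;
    }

    // Summarise iterations [start, end) as a single affine map x -> A*x + B.
    // No value of x is needed, so each chunk can be summarised on its own.
    static long[] summarise(long[] a, long[] b, int start, int end) {
        long bigA = 1;
        long bigB = 0;
        for (int i = start; i < end; i++) {
            // Composing step i after the map so far: a[i]*(A*x + B) + b[i]
            bigA = a[i] * bigA;
            bigB = a[i] * bigB + b[i];
        }
        return new long[] {bigA, bigB};
    }

    // Restructured loop (chunks must be at least 1).
    static long runChunked(long[] a, long[] b, long x0, int chunks) {
        int n = a.length;
        int size = (n + chunks - 1) / chunks; // ceiling division
        long[][] maps = new long[chunks][];
        // These iterations are independent of one another: this is the loop
        // a conventional parallelizing optimization could now distribute.
        for (int c = 0; c < chunks; c++) {
            int start = Math.min(n, c * size);
            int end = Math.min(n, start + size);
            maps[c] = summarise(a, b, start, end);
        }
        // Short sequential pass: one step per chunk instead of one per iteration.
        long x = x0;
        for (long[] m : maps) {
            x = m[0] * x + m[1];
        }
        return x;
    }

    public static void main(String[] args) {
        long[] a = {2, 3, 1, 2};
        long[] b = {1, 0, 4, 1};
        System.out.println(runSequential(a, b, 1)); // prints 27
        System.out.println(runChunked(a, b, 1, 2)); // prints 27
    }
}
```

The example uses `long` arithmetic so that both versions give exactly the same result. With floating-point values the restructured loop reorders operations and may differ in the last bits.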