Steve Furber

Professor

Room number: IT208

email: steve.furber@manchester.ac.uk

Tel.: +44 161 275 6129

Other contact details

New: Elected IEEE Fellow (2005)

Steve Furber is the ICL Professor of Computer Engineering in the Department of Computer Science at the University of Manchester. He received his B.A. degree in Mathematics in 1974 and his Ph.D. in Aerodynamics in 1980 from the University of Cambridge, England. From 1980 to 1990 he worked in the hardware development group within the R&D department at Acorn Computers Ltd, and was a principal designer of the BBC Microcomputer and the ARM 32-bit RISC microprocessor, both of which earned Acorn Computers a Queen's Award for Technology. Upon moving to the University of Manchester in 1990 he established the Amulet research group, which has interests in asynchronous logic design and power-efficient computing, and which merged with the Parallel Architectures and Languages group in 2000 to form the Advanced Processor Technologies group. Steve is a Fellow of the Royal Society, the Royal Academy of Engineering, the British Computer Society, the Institution of Electrical Engineers and the IEEE, and a Chartered Engineer. In 2003 he was awarded a Royal Academy of Engineering Silver Medal for "an outstanding and demonstrated personal contribution to British engineering, which has led to market exploitation". In 2004 he became the holder of a Royal Society Wolfson Research Merit Award.

Steve's book: ARM System-on-Chip Architecture

Building a Common Vision for the UK Microelectronic Design Research Community

This community-building initiative began with a workshop hosted by the IEE at Savoy Place, London, on November 15 2004. A second workshop was held in Reading on February 22 2005 and this was followed by discussions at UKDF in Manchester on April 14 2005. Various submissions to the initiative can be found here.

Research interests

- Asynchronous systems


Since 1990 I have been building large-scale asynchronous VLSI systems, most notably the Amulet processor series detailed elsewhere on these web pages. This general interest in asynchronous systems persists, but the specific projects that I am currently engaged in are those listed below.

- Ultra-low-power processors for sensor networks


The growing interest in ubiquitous and pervasive computing creates a demand for systems that require minimal human attention. You can look after a few computers - your laptop, PDA, mobile phone, and so on - but you cannot attend to each of the hundreds of computer systems that will soon be around you. In particular, it will not be practical for each of these systems to require a battery change every few months. Hence, in this research, we are looking at ways to reduce the power requirement of small sensor systems to the point where they can run from scavenged energy - energy derived from their environment in the form of light, vibration, body heat or similar sources.

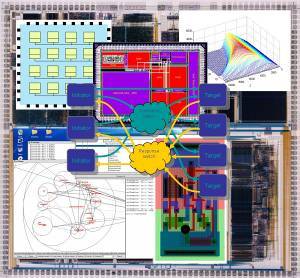

- On-chip interconnect and GALS


As Systems-on-Chip become ever more complex, the problem of linking together the various modules that make up the complete system - the processor, memory, peripherals, signal processing hardware, and so on - becomes correspondingly harder. The solution for simpler SoCs was to use buses, but already high-end SoCs require hierarchies of buses to meet their performance targets. Ultimately this will lead to on-chip interconnect that is better viewed as a network than as a bus. Networks-on-Chip are at their most flexible if they are self-timed, and a GALS - Globally Asynchronous Locally Synchronous - architecture emerges that allows each module to operate with its own independent clock (or, for the more adventurous, no clock at all!). Our present work focusses on the GALS network fabric (e.g. CHAIN) and on Quality-of-Service issues.

- Neural systems engineering


The classical computational paradigm performs impressive feats of calculation but fails in some of the simplest tasks that we humans undertake with ease and from a very early age. Biological neural networks are proof that there are alternative computational architectures that can outperform our fastest systems in tasks such as face recognition, speech processing, and the use of natural language. Brains are complex, highly parallel systems that employ imperfect and slow (though exceedingly power-efficient) components in asynchronous dynamical configurations to carry out sophisticated information processing functions. Note the word asynchronous in the previous sentence! Many aspects of brain function are little understood, but we hope that our deep understanding of the engineering of complex asynchronous systems may be of use in the Grand Challenge of understanding the architecture of brain and mind.

You can find a simple simulation of the effects of evolution on the performance of a simple neural network here.